OpenHome Ability Templates

Five templates. Four ability categories. Unlimited possibilities.

OpenHome ability templates are starter blueprints. They are intentionally minimal and focused on runtime architecture, not polished UX.

What OpenHome Actually Is

OpenHome runs AI agents that can trigger Python abilities. Those abilities can:

- control local tools through bridge methods

- call APIs and external services

- process ambient audio in loops

- store and share state over time

- run continuously as background daemons

LLMs are central in this model. They route, transform, and summarize, while abilities execute concrete actions.

Ability Categories

| Category | Trigger | Lifecycle | Entry File |
|---|---|---|---|
| Skill | User hotword | Runs once, exits | main.py |
| Brain Skills | Agent brain routing | Runs on demand when the brain delegates work | main.py |
| Background Daemon | Automatic on session start | Runs continuously until session end | background.py |
| Local | User hotword | Runs on-device with hardware access | main.py + devkit_functions.py |

- Brain Skills templates are still being finalized.

Background Daemon Entry Contract

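For daemon templates, the entry file must be named exactly background.py. Its call() method receives background_daemon_mode and schedules the background loop as a session task: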

    def call(self, worker, background_daemon_mode: bool):
        self.worker = worker
        self.background_daemon_mode = background_daemon_mode
        self.capability_worker = CapabilityWorker(self)
        self.worker.session_tasks.create(self.background_loop())

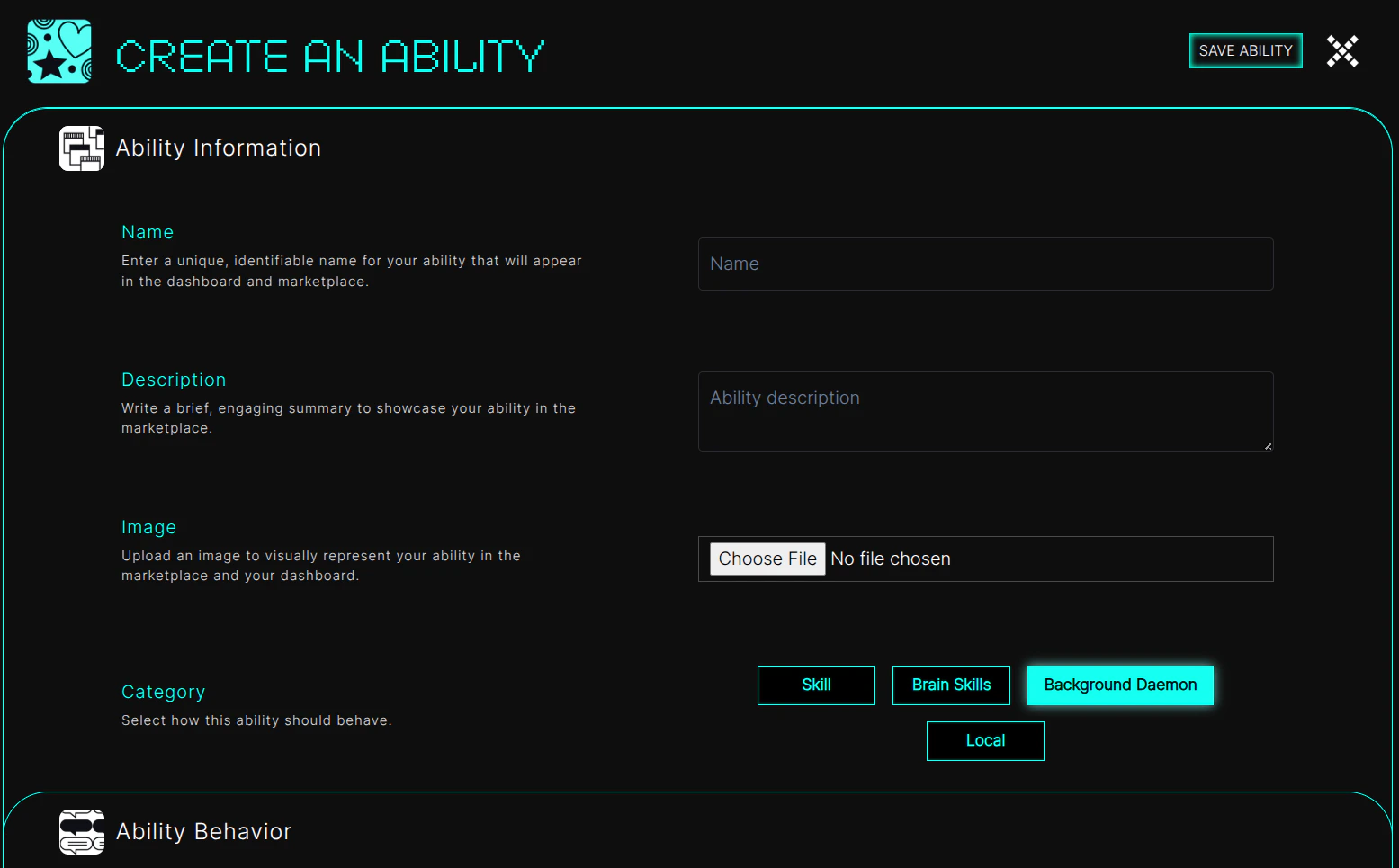

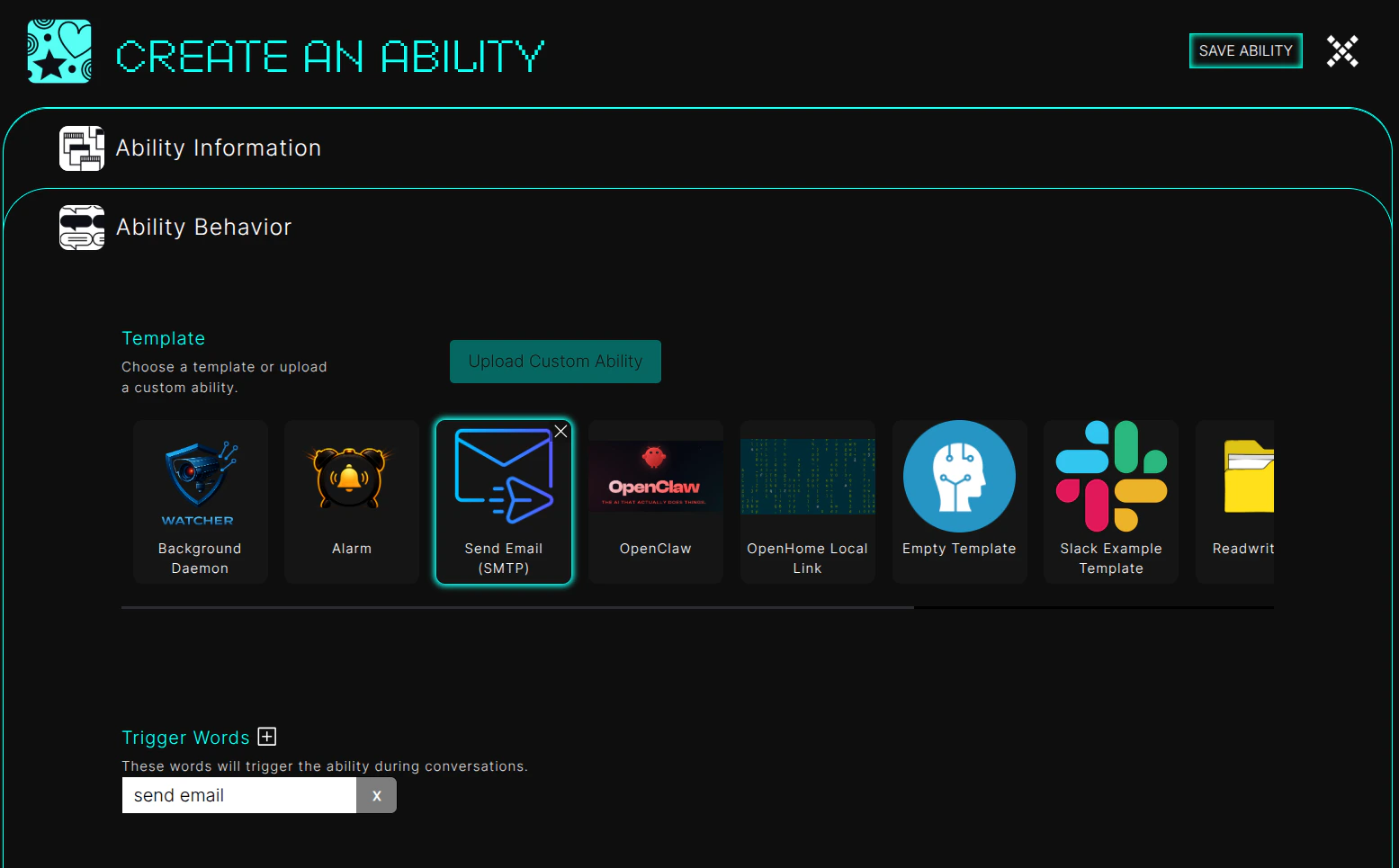

How To Use Templates In Dashboard

- Click Create on https://app.openhome.com/dashboard/home.

- Select Agent Ability.

- Fill the Create Ability form and choose any category for your ability.

- Fill Ability Behavior, then choose the template you want.

- Click Save Ability.

Template Directory

templates/

│

├── basic-template/ ← Minimal Skill skeleton

├── api-template/ ← Call an external REST API

├── loop-template/ ← Multi-turn looping Skill

│

├── SendEmail/ ← Fire-and-forget action

├── Local/ ← LLM-to-local-command translation

├── OpenClaw/ ← Route request to OpenClaw

│

├── Background/ ← Standalone background daemon

├── Alarm/ ← Skill + Background daemon combo

│

└── ReadWriteFile/ ← Shared file storage / IPC pattern

Template README Sources

Start Here Templates

basic-template (Skill, minimal)

Purpose: absolute minimum lifecycle implementation.

    async def run(self):
        await self.capability_worker.speak("Hi! How can I help you today?")
        user_input = await self.capability_worker.user_response()
        response = self.capability_worker.text_to_text_response(
            f"Give a short, helpful response to: {user_input}"
        )
        await self.capability_worker.run_io_loop(response + " Are you satisfied with the response?")
        await self.capability_worker.speak("Thank you for using the advisor. Goodbye!")
        self.capability_worker.resume_normal_flow()

api-template (Skill, external API)

Purpose: ask user input, call API, summarize result, exit cleanly.

    async def run(self):
        await self.capability_worker.speak("Sure! What would you like me to look up?")
        user_input = await self.capability_worker.user_response()
        result = await self.fetch_data(user_input)
        if result:
            spoken = self.capability_worker.text_to_text_response(
                f"Summarize this data in one short sentence for voice: {result}"
            )
            await self.capability_worker.speak(spoken)
        else:
            await self.capability_worker.speak("Sorry, I couldn't get that information right now.")
        self.capability_worker.resume_normal_flow()

loop-template (Skill, long-running loop)

Purpose: interactive multi-turn skill with explicit exit words.

    EXIT_WORDS = {"stop", "exit", "quit", "done", "cancel", "bye", "goodbye", "leave"}

    async def run(self):
        await self.capability_worker.speak("I'm ready to help. Ask me anything, or say stop.")
        while True:
            user_input = await self.capability_worker.user_response()
            if not user_input:
                continue
            if any(word in user_input.lower() for word in EXIT_WORDS):
                await self.capability_worker.speak("Goodbye!")
                break
            response = self.capability_worker.text_to_text_response(
                f"Respond in one short sentence: {user_input}"
            )
            await self.capability_worker.speak(response)
        self.capability_worker.resume_normal_flow()
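The exit check in the loop is a substring test, so it also fires on words that merely contain an exit word, such as "stop" inside "stopwatch" or "done" inside "abandoned". If that matters for your ability, a whole-word match is one option. The sketch below is not part of the template; `wants_exit` is a hypothetical helper name.

```python
import re

EXIT_WORDS = {"stop", "exit", "quit", "done", "cancel", "bye", "goodbye", "leave"}


def wants_exit(user_input: str) -> bool:
    """Return True only if a whole word in the utterance is an exit word."""
    # Keep runs of letters/apostrophes so punctuation does not glue onto words.
    tokens = re.findall(r"[a-z']+", user_input.lower())
    return any(token in EXIT_WORDS for token in tokens)
```

Inside the loop, `if wants_exit(user_input):` would replace the `any(word in ...)` condition.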

The Five Core Templates

1. SendEmail (Skill · Fire-and-forget)

What it demonstrates:

- one trigger, one action, one status response

- synchronous SDK call in async flow

- required handoff to resume_normal_flow()

    async def email_sender(self):
        status = self.capability_worker.send_email(
            host="smtp.gmail.com",
            port=465,
            sender_email="test@gmail.com",
            sender_password="app-password",
            receiver_email="receiver_test@gmail.com",
            cc_emails=[],
            subject="Test Email",
            body="Hello from OpenHome!",
            attachment_paths=["testfile.txt"],
        )
        await self.capability_worker.speak(
            "Email has been sent successfully." if status else "Failed to send email"
        )
        self.capability_worker.resume_normal_flow()

- collect recipient/body from user, do not hardcode

- add confirmation step before send

- secure credential handling
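As one way to approach the credential and confirmation points above, the sketch below reads SMTP settings from environment variables and checks a spoken confirmation. The variable names (`SMTP_SENDER`, `SMTP_PASSWORD`, `SMTP_HOST`, `SMTP_PORT`) and both helper functions are hypothetical, and whether environment variables are available inside an ability depends on the runtime, so treat that as an assumption.

```python
import os

AFFIRMATIVE = {"yes", "yeah", "yep", "sure", "confirm"}


def load_smtp_settings(env=os.environ) -> dict:
    """Read SMTP settings from a mapping (the environment by default)."""
    missing = [name for name in ("SMTP_SENDER", "SMTP_PASSWORD") if not env.get(name)]
    if missing:
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
    return {
        "host": env.get("SMTP_HOST", "smtp.gmail.com"),
        "port": int(env.get("SMTP_PORT", "465")),
        "sender_email": env["SMTP_SENDER"],
        "sender_password": env["SMTP_PASSWORD"],
    }


def is_confirmed(reply: str) -> bool:
    """Accept only replies whose first word is affirmative ("not sure" is rejected)."""
    words = reply.lower().replace(",", " ").replace(".", " ").split()
    return bool(words) and words[0] in AFFIRMATIVE
```

The returned dictionary uses the same keyword names as the send_email() call in the template, so it could be unpacked into that call after the user confirms.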

2. OpenHome-local (Skill · LLM-as-translator)

What it demonstrates:

- user speech to terminal command generation

- local execution bridge with exec_local_command()

- second LLM pass to explain execution result

    user_inquiry = await self.capability_worker.wait_for_complete_transcription()
    terminal_command = self.capability_worker.text_to_text_response(
        user_inquiry, [], self.get_system_prompt()
    ).strip()
    await self.capability_worker.speak(f"Running command: {terminal_command}")
    response = await self.capability_worker.exec_local_command(terminal_command)
    result = self.capability_worker.text_to_text_response(
        f"check if the command successfully ran? response is: {response}",
        [{"role": "user", "content": user_inquiry}],
        "Explain success/failure in simple spoken language.",
    )
    await self.capability_worker.speak(result)
    self.capability_worker.resume_normal_flow()

- command allowlist / denylist

- confirmation before high-risk commands

- timeout and error boundaries
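A command allowlist can be sketched with the standard library alone. The allowed set below is purely illustrative, and `is_command_allowed` is a hypothetical helper, not an SDK function. The idea is to refuse any LLM-generated command that uses shell operators or whose executable is not explicitly permitted.

```python
import shlex

ALLOWED_COMMANDS = {"ls", "pwd", "date", "whoami", "uptime"}  # illustrative only
BLOCKED_TOKENS = (";", "&&", "||", "|", ">", "<", "`", "$(")


def is_command_allowed(command: str) -> bool:
    """Allow a command only if it has no shell operators and an allowlisted executable."""
    if any(token in command for token in BLOCKED_TOKENS):
        return False
    try:
        parts = shlex.split(command)
    except ValueError:
        # Unbalanced quotes and similar parse errors: refuse rather than guess.
        return False
    return bool(parts) and parts[0] in ALLOWED_COMMANDS
```

In the template flow, this check would sit between generating `terminal_command` and calling exec_local_command(), with a spoken refusal when it returns False.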

3. openclaw-template (Skill · Sandbox escape)

What it demonstrates:

- pass-through request routing to OpenClaw

- no terminal generation layer, direct local AI routing

    user_inquiry = await self.capability_worker.wait_for_complete_transcription()
    await self.capability_worker.speak("Sending Inquiry to OpenClaw")
    response = await self.capability_worker.exec_local_command(user_inquiry)
    await self.capability_worker.speak(response["data"])
    self.capability_worker.resume_normal_flow()

- robust response validation

- fallback for unmatched tools

- timeout + retry strategy
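The template indexes `response["data"]` directly, which fails if the bridge returns nothing or an unexpected shape. The sketch below shows one possible validation and timeout/retry wrapper. Both helpers are hypothetical, the exact response format is assumed from the template's single usage, and whether exec_local_command() tolerates being cancelled on timeout is not confirmed here.

```python
import asyncio

FALLBACK_REPLY = "Sorry, I didn't get a usable answer."


def extract_reply(response, fallback: str = FALLBACK_REPLY) -> str:
    """Return response["data"] if it is a non-empty string, else a spoken fallback."""
    if isinstance(response, dict):
        data = response.get("data")
        if isinstance(data, str) and data.strip():
            return data.strip()
    return fallback


async def call_with_retry(make_call, attempts: int = 2, timeout_s: float = 15.0):
    """Await make_call() up to `attempts` times, allowing `timeout_s` seconds per try."""
    for _ in range(attempts):
        try:
            return await asyncio.wait_for(make_call(), timeout=timeout_s)
        except asyncio.TimeoutError:
            continue  # try again until attempts are exhausted
    return None
```

A call site might look like `response = await call_with_retry(lambda: self.capability_worker.exec_local_command(user_inquiry))` followed by `speak(extract_reply(response))`.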

4. Background + Alarm (Background daemon pattern)

What it demonstrates:

- continuous background loop with session_tasks.sleep()

- reading session history / file state periodically

- skill + daemon coordination through shared storage

Background loop pattern:

    async def first_function(self):
        while True:
            history = self.capability_worker.get_full_message_history()[-10:]
            for message in history:
                self.worker.editor_logging_handler.info(
                    f"Role: {message.get('role','')}, Message: {message.get('content','')}"
                )
            await self.worker.session_tasks.sleep(20.0)

Alarm trigger pattern:

    if now >= target_dt:
        await self.capability_worker.send_interrupt_signal()
        await self.capability_worker.play_from_audio_file("alarm.mp3")
        await self._mark_alarm_triggered(alarms, alarm_id)

- use session_tasks.sleep(), not asyncio.sleep()

- do not call resume_normal_flow() in daemon loops

- interrupt before daemon speech/audio when needed

- persist JSON safely (see ReadWriteFile rules)

- background file name must be exactly background.py
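The alarm check compares `now` against `target_dt`, but the snippet does not show how those values are produced. One way to select due alarms from stored state is sketched below. The record fields (`id`, `target_iso`, `triggered`) and the `due_alarms` helper are hypothetical, and timestamps are assumed to be naive ISO 8601 strings in local time.

```python
from datetime import datetime


def due_alarms(alarms: list, now: datetime) -> list:
    """Return alarms whose target time has passed and that have not fired yet."""
    due = []
    for alarm in alarms:
        if alarm.get("triggered"):
            continue
        try:
            target_dt = datetime.fromisoformat(alarm["target_iso"])
        except (KeyError, TypeError, ValueError):
            # Skip malformed entries instead of crashing the daemon loop.
            continue
        if now >= target_dt:
            due.append(alarm)
    return due
```

The daemon loop would call this once per iteration with `datetime.now()`, then interrupt, play audio, and mark each returned alarm as triggered.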

5. loop-template a.k.a. Log-My-Life pattern (Skill · Ambient observer)

What it demonstrates:

- long-running session loop

- periodic capture/process/respond

- explicit phrase-based exit

Core ambient loop shape:

    while self.is_running:
        self.capability_worker.start_audio_recording()
        exit_requested = await self.wait_for_interval_or_exit()
        self.capability_worker.stop_audio_recording()
        audio_bytes = self.capability_worker.get_audio_recording()
        await self.process_chunk(audio_bytes, recording_length)
        if exit_requested:
            self.is_running = False

- get_audio_recording() may return cumulative recording data.

- If chunking externally, track the prior byte offset and slice out the new data before transcription/analysis.
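Offset tracking for a cumulative buffer can be sketched as follows. `NewAudioSlicer` is a hypothetical helper. It assumes the recording is a plain byte stream where slicing at an arbitrary offset is meaningful, which may not hold for container formats that carry a header.

```python
class NewAudioSlicer:
    """Tracks how many bytes of a cumulative recording were already processed."""

    def __init__(self) -> None:
        self.offset = 0

    def take_new(self, cumulative: bytes) -> bytes:
        """Return only the bytes added since the previous call."""
        # A shorter buffer means the recorder was reset; start again from zero.
        if len(cumulative) < self.offset:
            self.offset = 0
        new_data = cumulative[self.offset:]
        self.offset = len(cumulative)
        return new_data
```

In the ambient loop, the result of `take_new(audio_bytes)` would be passed on for processing instead of the full buffer.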

Utility Pattern: ReadWriteFile

Purpose: safe shared-state and IPC pattern across Skill and Daemon.

    # requires: from time import time
    if await self.capability_worker.check_if_file_exists("temp_data.txt", in_ability_directory=False):
        # File exists: append a new line.
        await self.capability_worker.write_file(
            "temp_data.txt",
            f"\n{time()}: {user_response}",
            in_ability_directory=False
        )
    else:
        # First write: no leading newline.
        await self.capability_worker.write_file(
            "temp_data.txt",
            f"{time()}: {user_response}",
            in_ability_directory=False
        )
    file_data = await self.capability_worker.read_file("temp_data.txt", in_ability_directory=False)

- always use delete-then-write when replacing full file content

- append mode is still good for .txt and .log activity streams
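Because write_file appends by default, writing JSON twice to the same file would concatenate two documents. A delete-then-write helper can be sketched as below. `FakeFileWorker` is an in-memory stand-in written for this example so the code runs without the SDK. It only mimics the method names and the append behavior described in this document, and `replace_json` is a hypothetical helper.

```python
import asyncio
import json


class FakeFileWorker:
    """In-memory stand-in for the SDK worker; write_file appends to existing content."""

    def __init__(self):
        self.files = {}

    async def check_if_file_exists(self, name, in_ability_directory=False):
        return name in self.files

    async def delete_file(self, name, in_ability_directory=False):
        self.files.pop(name, None)

    async def write_file(self, name, content, in_ability_directory=False):
        self.files[name] = self.files.get(name, "") + content

    async def read_file(self, name, in_ability_directory=False):
        return self.files[name]


async def replace_json(worker, name: str, data: dict) -> None:
    """Replace the whole file with serialized JSON using delete-then-write."""
    if await worker.check_if_file_exists(name, in_ability_directory=False):
        await worker.delete_file(name, in_ability_directory=False)
    await worker.write_file(name, json.dumps(data), in_ability_directory=False)


async def demo_replace_json() -> dict:
    worker = FakeFileWorker()
    await replace_json(worker, "alarms.json", {"alarms": [1]})
    await replace_json(worker, "alarms.json", {"alarms": [1, 2]})
    # Without the delete step, the file would hold two JSON documents back to back.
    return json.loads(await worker.read_file("alarms.json"))
```

In a real ability, `self.capability_worker` would take the place of the fake worker.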

Utility Pattern: .md Context Injection

Purpose: feed ambient context into the Agent prompt through persistent markdown files.

    content = "## Emotional State\n- Current: frustrated (confidence: 0.87)\n"
    exists = await self.capability_worker.check_if_file_exists("audio_emotion.md", in_ability_directory=False)
    if exists:
        await self.capability_worker.delete_file("audio_emotion.md", in_ability_directory=False)
    await self.capability_worker.write_file("audio_emotion.md", content, in_ability_directory=False)

- only persistent .md files are injected into Agent context

- leave user_profile.md and user_summary.md to the platform's memory background, which owns them

- keep each injected .md file short and current-state focused

- expect ~60-90 seconds before a newly written .md file is reflected in responses

See: Agent Memory & Context Injection
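To keep injected files short and focused on current state, the content can be generated from a small dictionary and checked for size before writing. The helper below is a hypothetical sketch. The 400-character limit is an arbitrary illustration, not a platform limit.

```python
def render_state_md(title: str, fields: dict, max_chars: int = 400) -> str:
    """Build a short markdown snippet: one heading plus one bullet per field."""
    lines = [f"## {title}"]
    lines += [f"- {key}: {value}" for key, value in fields.items()]
    content = "\n".join(lines) + "\n"
    if len(content) > max_chars:
        raise ValueError(f"Context snippet is {len(content)} chars; limit is {max_chars}")
    return content
```

Calling `render_state_md("Emotional State", {"Current": "frustrated (confidence: 0.87)"})` reproduces the `content` string used in the example above.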

Utility Pattern: Key-Value Context Storage

Purpose: structured state for preferences, conversation workflows, feature flags, and cache metadata.

Notes:

- Key-value methods are synchronous (do not await).

- Store JSON dictionaries (dict) as values.

    existing = self.capability_worker.get_single_key("conversation_456_state")
    if existing:
        self.capability_worker.update_key(
            "conversation_456_state",
            {"step": "confirmed", "intent": "book_flight", "destination": "Dubai"},
        )
    else:
        self.capability_worker.create_key(
            "conversation_456_state",
            {"step": "awaiting_confirmation", "intent": "book_flight", "destination": "Dubai"},
        )

- create_key(key, value)

- update_key(key, value)

- delete_key(key)

- get_all_keys()

- get_single_key(key)

- missing-key-safe pattern: read with get_single_key() before update_key(), create when absent
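The missing-key-safe pattern can be wrapped in a small upsert helper. `FakeKeyValueWorker` below is an in-memory stand-in so the sketch runs without the SDK, and `upsert_key` is a hypothetical name. The sketch assumes, as the example above does, that get_single_key() returns a falsy value for a missing key.

```python
class FakeKeyValueWorker:
    """In-memory stand-in for the SDK's synchronous key-value methods."""

    def __init__(self):
        self.store = {}

    def get_single_key(self, key):
        return self.store.get(key)

    def create_key(self, key, value):
        self.store[key] = dict(value)

    def update_key(self, key, value):
        self.store[key] = dict(value)


def upsert_key(worker, key: str, value: dict) -> str:
    """Update the key if it exists, otherwise create it; report which path ran."""
    if worker.get_single_key(key):
        worker.update_key(key, value)
        return "updated"
    worker.create_key(key, value)
    return "created"
```

With the real worker, the call would be `upsert_key(self.capability_worker, "conversation_456_state", {...})`, without `await`.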

How Templates Map To Ability Types

| Template | Type | Key SDK Methods |
|---|---|---|
| SendEmail | Skill | send_email(), speak(), resume_normal_flow() |
| OpenHome-local | Skill | wait_for_complete_transcription(), text_to_text_response(), exec_local_command() |
| openclaw-template | Skill | wait_for_complete_transcription(), exec_local_command(), speak() |
| Background | Background Daemon | get_full_message_history(), session_tasks.sleep() |
| Alarm | Skill + Daemon | read_file(), write_file(), send_interrupt_signal(), play_from_audio_file(), session_tasks.sleep() |
| loop-template | Skill (long-running) | start_audio_recording(), stop_audio_recording(), get_audio_recording(), text_to_text_response() |
| ReadWriteFile | Utility / IPC | check_if_file_exists(), read_file(), write_file(), delete_file() |
| Context Storage | Utility / State | create_key(), update_key(), get_single_key(), get_all_keys(), delete_key() |
| basic-template | Skill | speak(), user_response(), run_io_loop(), resume_normal_flow() |
| api-template | Skill | user_response(), text_to_text_response(), resume_normal_flow() |

Critical Technical Rules

| Rule | Why |
|---|---|
| Call resume_normal_flow() on every Skill exit path | Returns control to the main agent flow |
| Do not use resume_normal_flow() inside daemon loops | Daemons are independent long-running tasks |
| Use call(self, worker, background_daemon_mode) in background.py | Ensures daemon startup contract is correct |
| Prefer session_tasks.sleep() over asyncio.sleep() | Ensures proper cleanup on session end |
| Treat JSON writes carefully | Default write_file mode appends and can corrupt JSON |
| Use persistent .md files for ambient prompt context | Memory background injects user-level markdown into Agent prompt |
| Use key-value context storage for structured state | create_key/update_key/get_single_key reduce JSON file bookkeeping |
| Validate local command execution | Prevent unsafe/destructive operations |
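One way to satisfy the first rule on every exit path, including exceptions, is a try/finally wrapper. The sketch below uses a `FakeWorker` stand-in that records calls so it runs without the SDK. `run_skill` and `failing_action` are hypothetical names, and the real worker's behavior may differ.

```python
import asyncio


class FakeWorker:
    """Stand-in for the SDK worker that records calls for the demo."""

    def __init__(self):
        self.calls = []

    async def speak(self, text):
        self.calls.append(("speak", text))

    def resume_normal_flow(self):
        self.calls.append(("resume_normal_flow",))


async def run_skill(worker, action) -> None:
    """Run `action`, apologise on failure, and always hand control back."""
    try:
        await action()
    except Exception:
        await worker.speak("Sorry, something went wrong.")
    finally:
        # Runs after success, failure, and early return alike.
        worker.resume_normal_flow()


async def failing_action():
    raise RuntimeError("simulated API failure")


def demo_exit_path() -> list:
    worker = FakeWorker()
    asyncio.run(run_skill(worker, failing_action))
    return worker.calls
```

This wrapper applies to Skills only. Daemon loops should not call resume_normal_flow(), as the second rule states.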

What You Can Build From These

- voice-composed email flows

- local machine operator abilities

- OpenClaw orchestration flows

- background profilers, summarizers, and schedulers

- ambient capture and extraction assistants

These templates are the foundation layer. Start with the closest pattern, keep lifecycle rules strict, and then harden for production.